Which should you use? Consider ETL if you’re creating data repositories that are smaller, need to be retained for a longer period and don’t need to be updated very often. Then, it is subsequently loaded into a data repository where it can be used. With ETL, after the data is extracted, it is then defined and transformed to improve data quality and integrity. It also lets you load datasets from the source. This allows data transformation to happen as required. With ELT, raw data is then loaded directly into the target data warehouse, data lake, relational database or data store. Examples include an enterprise resource planning (ERP) platform, social media platform, Internet of Things (IoT) data, spreadsheet and more. They use the same steps in a different order for different data management functions.īoth ELT and ETL extract raw data from different data sources. ELTĮxtract transform load and extract load transform are two different data integration processes. Next, it loads the data into a centralized location where it can be accessed on demand.Ĭloud ETL is often used to make high-volume data readily available for analysts, engineers and decision makers across a variety of use cases. It then consolidates and transforms that data. The data could be in on-premises or cloud data warehouses. Cloud ETLĬloud, or modern, ETL extracts both structured and unstructured data from any data source type. That often forces organizations to choose between detailed data or fast performance. Plus, traditional ETL systems can’t easily handle spikes in large data volumes. To extract the necessary data analytics, IT teams often create complicated, labor-intensive customizations and exact quality control. Real-time analysis can be hard to achieve. This ideally occurs when traffic on the network is reduced. Their job is to create and manage in-house data pipelines and databases.Īs a process, it generally relies on batch processing sessions that allow data to be moved in scheduled batches.

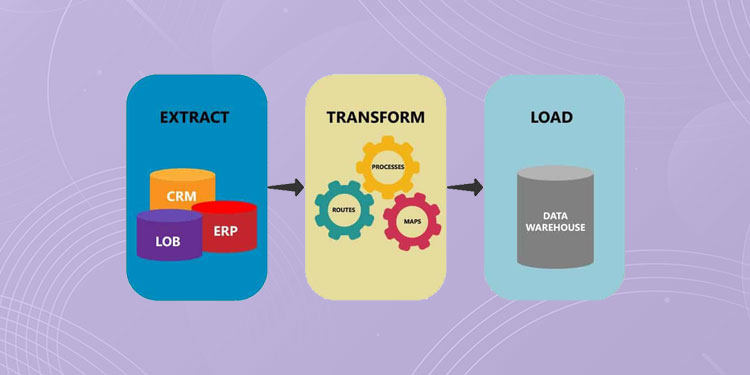

Traditional or legacy ETL is designed for data located and managed completely on-premises by an experienced in-house IT team. Many organizations regularly perform this process to keep their data warehouse updated. Once all the data has been loaded, the process is complete. This could be a target database, data warehouse, data store, data hub or data lake - on-premises or in the cloud. Loadįinally, the load phase moves the transformed data into a permanent target system.

It also makes the data fit for consumption for analytics, business functions and other downstream activities. This helps to normalize, standardize and filter data. Typical transformations include aggregators, data masking, expression, joiner, filter, lookup, rank, router, union, XML, Normalizer, H2R, R2H and web service. The goal of transformation is to make all data fit within a uniform schema before it moves on to the last step. In the transformation phase, data is processed to make its values and structure conform consistently with its intended use case. Data that fails validation is rejected and doesn’t continue to the next step. This tests whether data meets the requirements of its destination. It is then held in temporary storage, where the next two steps are executed.ĭuring extraction, validation rules are applied. Here’s a quick summary: ExtractĮxtraction is the first phase of “extract, transform, load.” Data is collected from one or more data sources. This could be a database, data warehouse, data store or data lake. ETL moves data in three distinct steps from one or more sources to another destination.
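To make the extract, transform and load steps more concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions: the sample records, the validation rule, the `sales` table and all function names are hypothetical, and an in-memory SQLite database stands in for the target system.

```python
import sqlite3

# Hypothetical source records; real sources might be an ERP platform,
# a spreadsheet or IoT data.
SOURCE_ROWS = [
    {"id": "1", "name": " Alice ", "amount": "10.50"},
    {"id": "2", "name": "BOB", "amount": "not-a-number"},  # fails validation
    {"id": "3", "name": "carol", "amount": "7"},
]


def extract(rows):
    """Extract: copy source records into temporary (staging) storage."""
    return [dict(row) for row in rows]


def is_valid(row):
    """Assumed validation rule: id is an integer and amount is numeric."""
    try:
        int(row["id"])
        float(row["amount"])
    except (KeyError, ValueError):
        return False
    return True


def transform(row):
    """Transform: coerce types and standardize names into one schema."""
    return (int(row["id"]), row["name"].strip().title(), float(row["amount"]))


def load(conn, records):
    """Load: write the transformed records into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (id INTEGER, name TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", records)
    conn.commit()


def run_etl(conn, rows):
    """Run the three steps in ETL order: extract, transform, then load."""
    staged = extract(rows)
    # Rows that fail validation are rejected and go no further.
    valid = [row for row in staged if is_valid(row)]
    load(conn, [transform(row) for row in valid])


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")  # stand-in for a data warehouse
    run_etl(connection, SOURCE_ROWS)
    print(connection.execute("SELECT * FROM sales ORDER BY id").fetchall())
```

In this sketch the second record is rejected during validation, so only two rows reach the target table. An ELT variant would load the raw rows first and run the transformation inside the target system instead.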
